
Offline Mode: Initializing the parallelization for PDAF


| This page describes the initialization of the parallelization for PDAF in PDAF V3.1. Implementations with PDAF V3.0 and before used a different scheme. See the page on init_parallel_pdaf in PDAF2.3 for information on the previous initialization scheme. |

Overview

The PDAF release provides example code for the offline mode in tutorial/offline_2D_parallel. We refer to this code here and use it as a basis.

The initialization of the parallelization is done by the subroutine init_parallel_pdaf. If one uses the tutorial implementation tutorial/offline_2D_parallel, or the template code in templates/offline as the basis for the implementation, one can simply use this routine without changes and directly proceed to the initialization of PDAF for the offline mode.

For completeness, we describe here PDAF's approach to the parallelization.

Background: Two MPI communicators

Like many numerical models, PDAF uses the MPI standard for the parallelization. Compiling PDAF requires that an MPI library is available. In any case, it is necessary to execute the routine init_parallel_pdaf as described below.

MPI uses so-called 'communicators' to define sets of parallel processes. In the offline mode, the communicators of the PDAF parallelization are mainly used internally by PDAF. However, the communicators need to be initialized by PDAF, and they are partly also used in the user code. For this reason, we describe them here.

The routine PDAF3_init_parallel, which is called in init_parallel_pdaf, is used for both the online and offline coupled modes. Because of this, it returns two communicators. They define the groups of processes that are involved in different tasks of the data assimilation system. These are:

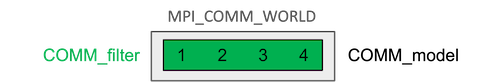

- COMM_filter - defines the processes that perform the filter analysis step

- COMM_model - defines the processes that are involved in the model integrations

For the offline mode, only COMM_filter is relevant, and COMM_model is here identical to COMM_filter. The tutorial source code partly uses both: COMM_filter and related variables in the call-back routines, and COMM_model in the main part of the code, in particular in initialize.F90, which defines the model grid dimensions.

The parallel region of an MPI-parallel program is initialized by calling MPI_Init (which is called inside PDAF3_init_parallel). Calling MPI_Init initializes the communicator MPI_COMM_WORLD, which is pre-defined by MPI to contain all processes of the MPI-parallel program. In the offline mode, it would be sufficient to conduct all parallel communication using only MPI_COMM_WORLD. However, because PDAF internally uses the communicators listed above, they have to be initialized. In the offline mode, they include the same processes as MPI_COMM_WORLD.

Figure 1: Example of a typical configuration of the communicators for the offline coupled assimilation with parallelization. We have 4 processes in this example. The communicators COMM_model and COMM_filter are initialized, and both use all processes (like MPI_COMM_WORLD). Thus, the configuration of the communicators is simpler than in the case of the parallelization for the online coupling.

Initializing the parallelization

The routine init_parallel_pdaf, which is supplied in templates/offline and tutorial/offline_2D_parallel, contains all necessary functionality for the initialization of the communicators. The actual initialization of MPI and of the communicators is performed in the call to the routine PDAF3_init_parallel (for details see the documentation on PDAF3_init_parallel).

init_parallel_pdaf is called at the beginning of the assimilation program. The provided file templates/offline/init_parallel_pdaf.F90 is a template implementation. It should not be necessary to modify this file.

Note: In implementations done with PDAF V3.0 and before, the actual communicators are generated in init_parallel_pdaf. In PDAF V3.0, the parallelization variables are then provided to PDAF by a call to PDAF3_set_parallel. Implementations with PDAF V2.x usually do not include this call, but provide the variables to PDAF in the call to PDAF_init. See the page on init_parallel_pdaf in PDAF2 for information on the previous initialization scheme.


Arguments of init_parallel_pdaf

In the template implementation the routine init_parallel_pdaf has one argument:

SUBROUTINE init_parallel_pdaf(screen)

screen: An integer defining whether information output is displayed. The following choices are available:

- 0: Quiet mode - no information is displayed.

- 1: Display standard information about the configuration of the processes (recommended)

- 2: Display detailed information for debugging

Testing the assimilation program

One can compile the template code and run it, e.g., with mpirun -np 4 PDAF_offline. If you set screen=1, you will see the following lines close to the beginning of the output:

```
 PDAF  MPI-initialization by PDAF
 PDAF    *** Initialize MPI communicators for assimilation with PDAF ***
 PDAF     Pconf  Process configuration:
 PDAF     Pconf   world    assim     model       couple   assimPE
 PDAF     Pconf   rank     rank    task rank   task rank    T/F
 PDAF     Pconf  ------------------------------------------------------------
 PDAF     Pconf     0        0       1    0      1    0      T
 PDAF     Pconf     2        2       1    2      3    0      T
 PDAF     Pconf     1        1       1    1      2    0      T
 PDAF     Pconf     3        3       1    3      4    0      T
```

These lines show the configuration of the communicators. Here, 'world rank' is the value of 'mype_world', and 'assim rank' is the filter rank, i.e. the value of 'mype_filter'. These ranks always start with 0. Because each process writes its own line, the rows can appear in any order.
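If one wants to check this configuration automatically, e.g. in a test script that inspects the program output, the 'Pconf' rows can be parsed with a few lines of Python. The following is a minimal sketch and not part of PDAF. The function names `parse_pconf` and `is_offline_layout` are hypothetical, the column meaning is an assumption read off the two header lines of the output, and the exact column spacing of the sample is reconstructed.

```python
import re

# Sample output for a run with 4 processes (spacing reconstructed).
SAMPLE = """\
 PDAF     Pconf  Process configuration:
 PDAF     Pconf   world    assim     model       couple   assimPE
 PDAF     Pconf   rank     rank    task rank   task rank    T/F
 PDAF     Pconf  ------------------------------------------------------------
 PDAF     Pconf     0        0       1    0      1    0      T
 PDAF     Pconf     2        2       1    2      3    0      T
 PDAF     Pconf     1        1       1    1      2    0      T
 PDAF     Pconf     3        3       1    3      4    0      T
"""

# A data row: "PDAF Pconf", six integers, and a T/F flag.
# Assumed column order (from the header): world rank, assim rank,
# model task, model rank, couple task, couple rank, assimPE.
ROW = re.compile(
    r"^\s*PDAF\s+Pconf\s+(\d+)\s+(\d+)\s+(\d+)\s+(\d+)\s+(\d+)\s+(\d+)\s+([TF])\s*$"
)
KEYS = ("world_rank", "assim_rank", "model_task",
        "model_rank", "couple_task", "couple_rank")


def parse_pconf(text):
    """Return the Pconf data rows as dicts, sorted by world rank."""
    rows = []
    for line in text.splitlines():
        match = ROW.match(line)
        if match is None:
            continue  # header, separator, or unrelated output line
        row = dict(zip(KEYS, (int(v) for v in match.groups()[:6])))
        row["assim_pe"] = match.group(7) == "T"
        rows.append(row)
    return sorted(rows, key=lambda r: r["world_rank"])


def is_offline_layout(rows, nprocs):
    """Check that all nprocs processes are filter processes in one model task."""
    if [r["world_rank"] for r in rows] != list(range(nprocs)):
        return False  # a process is missing or a row could not be parsed
    return all(
        r["assim_pe"]
        and r["model_task"] == 1
        and r["world_rank"] == r["assim_rank"] == r["model_rank"]
        for r in rows
    )


if __name__ == "__main__":
    print(is_offline_layout(parse_pconf(SAMPLE), nprocs=4))
```

The rows are sorted by world rank because the processes write their lines in arbitrary order. Passing the expected number of processes (the value given to `mpirun -np`) guards against rows that were silently skipped by the parser.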

In the tutorial code in tutorial/offline_2D_parallel we have set screen=0 in main_offline.F90. Thus, the lines above will only be shown if you modify the value of screen. Generally, seeing the communicator setup is not really important for the offline mode.